5. Privacy-preserving Data Synthesis

While synthetic data attempts to break direct one-to-one mappings between real and synthetic records, its “synthetic” label alone does not guarantee privacy. Like any AI/ML model, a generator—also called a synthesizer—learns patterns from real data, and under certain conditions, those patterns can leak sensitive or identifiable information.

A context-driven privacy evaluation is therefore essential before sharing or using synthetic data in sensitive scenarios. Privacy risks should be assessed using complementary methods, each providing a different lens on potential leakage.

1. Empirical Privacy Risk Assessment: Smoke Testing

Empirical checks are a quick way to flag potential privacy leaks—situations where synthetic data reveals information about real individuals from the real dataset. These tests look for signs that the synthesizer memorized specific real records instead of learning general patterns.

What is a privacy leak? A privacy leak occurs when synthetic data contains information that could identify real people in the real dataset or reveal sensitive details about them. For example, if a synthetic patient record is nearly identical to a real patient’s record, it could expose that person’s medical information.

Common “smoke tests” include:

-

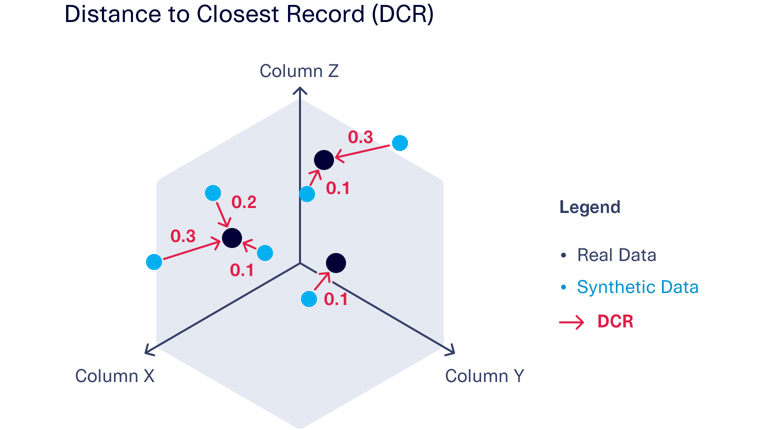

Distance-based measures (similarity/proximity checks): Compare synthetic records directly against the real dataset to find close matches. If many synthetic records closely resemble real ones, this suggests the generator copied rather than learned patterns.

-

Duplicate detection: Check if any synthetic records are exact copies or near-duplicates of real records. Exact matches are clear privacy violations.

-

Outlier detection: Identify unusual synthetic records (rare combinations of attributes) and check if they match real outliers. Copying rare cases is particularly risky for privacy.

Figure 1: Example of distance-based privacy evaluation using DCR (distance to closest record) measurements to evaluate the overall distance between real and synthetic data. For every point of synthetic data (blue), the closest point of real data (black) is identified. The DCR (red line) is the distance to that real data point. In this example, there are three columns of data (X, Y and Z); the same DCR metric can be applied to any number of columns.

Image reference:

SDV DCRBaselineProtection
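The three smoke tests above, including the DCR measurement from Figure 1, can be sketched in a few lines of NumPy. This is a minimal illustration and not SDV's implementation. It rests on several assumptions of our own:

- numeric features that are already scaled to comparable ranges;
- Euclidean distance;
- hypothetical function names (`dcr`, `exact_match_rate`, `closer_to_train_share`, `copied_outliers`);
- a 95th-percentile outlier cutoff, which is an arbitrary example choice.

```python
import numpy as np

def pairwise_dist(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Euclidean distances between every row of `a` and every row of `b`."""
    diffs = a[:, None, :] - b[None, :, :]  # shape (len(a), len(b), n_cols)
    return np.sqrt((diffs ** 2).sum(axis=-1))

def dcr(synthetic: np.ndarray, real: np.ndarray) -> np.ndarray:
    """Distance to closest record: one value per synthetic row."""
    return pairwise_dist(synthetic, real).min(axis=1)

def exact_match_rate(synthetic: np.ndarray, real: np.ndarray, tol: float = 1e-9) -> float:
    """Share of synthetic rows that (near-)exactly duplicate some real row."""
    return float(np.mean(dcr(synthetic, real) <= tol))

def closer_to_train_share(synthetic, train, holdout) -> float:
    """Share of synthetic rows closer to the training data than to a holdout set
    from the same population. Values well above ~0.5 hint at memorization
    (assuming train and holdout are similar in size and distribution)."""
    return float(np.mean(dcr(synthetic, train) < dcr(synthetic, holdout)))

def copied_outliers(synthetic, real, quantile=0.95, tol=1e-9) -> int:
    """Count real outliers (most isolated rows, by distance to the nearest *other*
    real row) that have a near-exact copy in the synthetic data."""
    within = pairwise_dist(real, real)
    np.fill_diagonal(within, np.inf)  # ignore each row's zero distance to itself
    isolation = within.min(axis=1)
    is_outlier = isolation >= np.quantile(isolation, quantile)
    copied = pairwise_dist(real, synthetic).min(axis=1) <= tol
    return int(np.sum(is_outlier & copied))

# Usage: the first synthetic row copies a real row; the second does not.
real = np.array([[0.0, 0.0, 0.0], [1.0, 1.0, 1.0], [5.0, 5.0, 5.0]])
synthetic = np.array([[1.0, 1.0, 1.0], [0.0, 0.0, 3.0]])
print(dcr(synthetic, real))               # first value 0.0, second sqrt(6)
print(exact_match_rate(synthetic, real))  # 0.5
```

`pairwise_dist` materializes every pairwise distance, so memory grows with len(synthetic) × len(real). For larger tables, a nearest-neighbor index is the usual replacement, for example scikit-learn's `NearestNeighbors` or SciPy's `cKDTree`. Mixed-type data also needs an explicit encoding and distance choice (e.g., one-hot encoding plus scaling, or Gower distance), and that choice strongly affects what counts as "close".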

2. Attack-Based Evaluation: Adversarial Testing

Attack-based evaluations assess privacy risks by testing how vulnerable synthetic data is to privacy attacks. Before interpreting attack results, consider your threat model: what are the attacker’s goals, background knowledge, and available resources?

Common “adversarial tests” include:

-

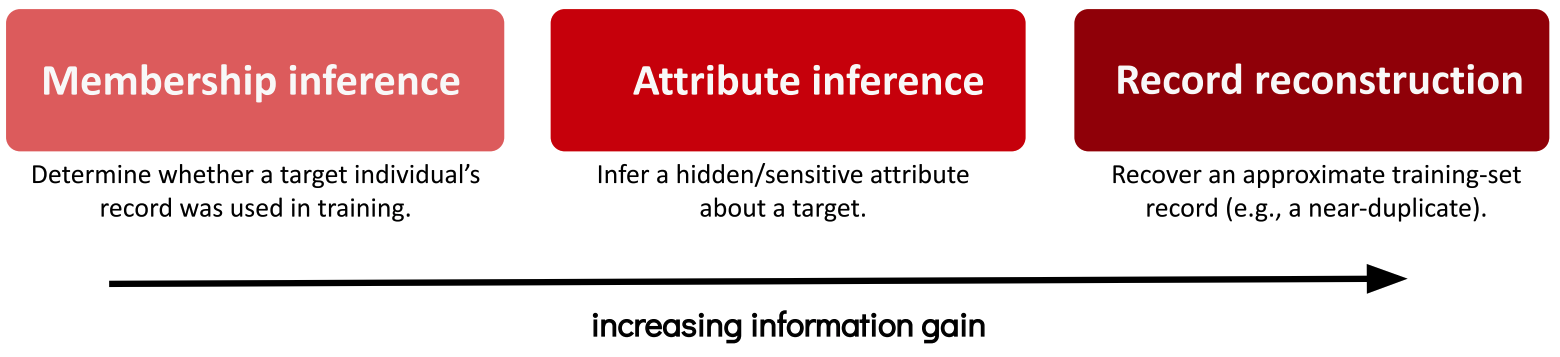

Membership inference: Determine whether a target individual’s record was used in training by probing model outputs or analyzing the synthetic release.1

-

Attribute inference (sensitive-attribute disclosure): Infer a hidden/sensitive attribute about a target using patterns learned by the synthesizer or models trained on the synthetic data.

-

Record reconstruction: Recover an approximate training-set record (e.g., a near-duplicate) from model behavior or the synthetic dataset.

Figure 2: Privacy attack goals against synthetic data arranged along an information-gain spectrum: membership inference, attribute inference, and record reconstruction.

Attack-based testing² provides useful risk signals because it works under realistic attacker assumptions, but it must be framed within a defined threat model to avoid over- or underestimating risk.
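A very simple version of the first two attacks can reuse the nearest-neighbor idea from the smoke tests. These are our own assumptions, not the source's:

- The attacker holds candidate target records with numeric, scaled features.
- Membership is scored by how close a target lies to its nearest synthetic record.
- Attribute inference copies the sensitive value of the synthetic record that is nearest on the attributes the attacker already knows.

The function names are hypothetical. Real evaluations typically use stronger attacks, such as shadow-model or density-based ones.

```python
import numpy as np

def nearest(points: np.ndarray, data: np.ndarray):
    """For each row of `points`: (distance to, index of) the nearest row of `data`."""
    dists = np.sqrt(((points[:, None, :] - data[None, :, :]) ** 2).sum(axis=-1))
    return dists.min(axis=1), dists.argmin(axis=1)

def membership_scores(targets: np.ndarray, synthetic: np.ndarray) -> np.ndarray:
    """Higher score = attacker more confident the target was a training member.
    Heuristic: members tend to have a synthetic record unusually close to them."""
    dist, _ = nearest(targets, synthetic)
    return -dist

def attack_auc(member_scores, nonmember_scores) -> float:
    """Probability that a random member outscores a random non-member (ties = 0.5).
    About 0.5 means no better than guessing; near 1.0 signals leakage."""
    m = np.asarray(member_scores)[:, None]
    n = np.asarray(nonmember_scores)[None, :]
    return float(np.mean(m > n) + 0.5 * np.mean(m == n))

def infer_sensitive(targets_known, synthetic_known, synthetic_sensitive):
    """Guess each target's sensitive attribute from the synthetic record that is
    closest on the attributes the attacker already knows (quasi-identifiers)."""
    _, idx = nearest(targets_known, synthetic_known)
    return np.asarray(synthetic_sensitive)[idx]

# Usage with toy data: members sit almost on top of synthetic records.
synthetic = np.array([[0.0, 0.0], [10.0, 10.5]])
members = np.array([[0.0, 0.0], [10.0, 10.0]])
nonmembers = np.array([[5.0, 5.0], [20.0, 20.0]])
auc = attack_auc(membership_scores(members, synthetic),
                 membership_scores(nonmembers, synthetic))
print(auc)  # 1.0: the attack perfectly separates members from non-members here
```

In practice, members come from the real training set and non-members from a held-out set. The resulting AUC is read against the threat model. For example, an attacker who sees only the synthetic release is weaker than one who can also query the synthesizer.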

3. Differential Privacy (DP): Formal Guarantees

Section titled “3. Differential Privacy (DP): Formal Guarantees”Differentially private

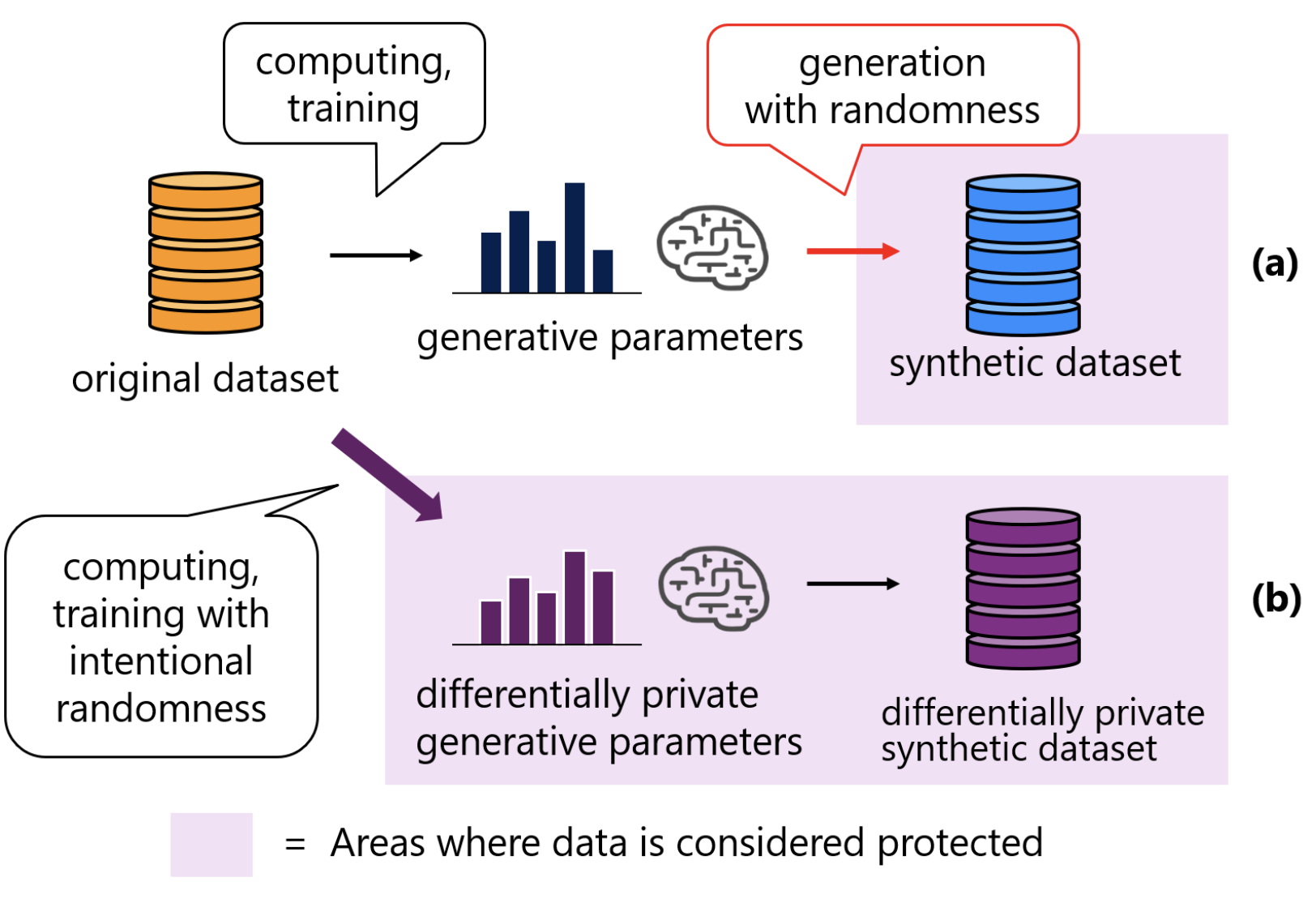

synthesizer training provides a mathematical guarantee that the trained model parameters, and any subsequent model outputs, are relatively unaffected by the addition, removal or change of any single user’s training examples. Unlike empirical or attack-based testing, DP’s protection does not rely on assumptions about attacker behavior.

Figure 3: Synthetic data generation without and with differential privacy (DP). (a) Non-DP: a model learns patterns from real data to sample synthetic records, often with high utility but no formal privacy guarantee and potential inference/memorization risks. (b) DP: training uses clipping and calibrated noise (tuned to (ε,δ)), yielding a model and synthetic outputs with a formal individual-level DP guarantee, typically with some utility trade-off.

Image reference:

On Renyi Differential Privacy in Statistics-Based Synthetic Data Generation

The guarantee works by adding carefully calibrated randomness (noise) to the synthesizer’s training process. The strength of the guarantee is measured by two privacy parameters, ε (epsilon) and δ (delta). Most practical deployments use (ε,δ)-DP, where δ can be read informally as the small probability that the guarantee fails. Smaller ε values mean stronger privacy but typically lower utility. Intuitively, DP ensures that each person’s data has almost no impact on the final synthetic dataset, making it highly uncertain whether their information was included at all.
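For reference, the standard (ε,δ)-DP definition can be written as follows. The notation is ours, not the source's. M is the randomized training-and-sampling procedure, and D, D′ are any two neighboring datasets that differ only in one user’s records.

```latex
\Pr[\mathcal{M}(D) \in S] \;\le\; e^{\varepsilon}\,\Pr[\mathcal{M}(D') \in S] + \delta
\qquad \text{for all neighboring } D, D' \text{ and all sets of outcomes } S.
```

Setting δ = 0 gives pure ε-DP. With ε = 1, for example, e^ε ≈ 2.72. This means that including or excluding one person’s records can make no outcome more than about 2.72 times more likely, up to the additive δ. A common rule of thumb is to choose δ much smaller than 1/n for a dataset of n records.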

When a synthesizer uses DP to protect privacy while generating data, the process is called differentially private synthetic data generation (DP-SDG).
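To make DP-SDG concrete, here is a minimal statistics-based sketch for a single categorical column. It counts each category, adds Laplace noise, and samples synthetic values from the noisy distribution. The sketch relies on three assumptions of our own:

- Each user contributes at most one record.
- Neighboring datasets differ by adding or removing one record, so one count changes by at most 1 and the histogram's L1 sensitivity is 1.
- The category list is fixed in advance rather than read from the private data.

Under those assumptions, the Laplace mechanism with scale 1/ε gives pure ε-DP (δ = 0). The clipping, normalizing, and sampling steps are post-processing, which cannot weaken the guarantee. The function name is hypothetical. A real synthesizer would model joint distributions across many columns, not a single marginal.

```python
import numpy as np

def dp_categorical_synthesizer(values, categories, epsilon, n_synthetic, rng=None):
    """Sample `n_synthetic` values from an epsilon-DP noisy histogram of `values`.
    `categories` must be public (fixed in advance), not derived from `values`."""
    rng = np.random.default_rng() if rng is None else rng
    values = np.asarray(values)
    # True count per category; adding/removing one record changes one count by 1.
    counts = np.array([np.sum(values == c) for c in categories], dtype=float)
    # Laplace mechanism: noise scale = sensitivity / epsilon = 1 / epsilon.
    noisy = counts + rng.laplace(loc=0.0, scale=1.0 / epsilon, size=counts.shape)
    # Post-processing (no extra privacy cost): clip negatives, normalize, sample.
    probs = np.clip(noisy, 0.0, None)
    if probs.sum() == 0.0:
        probs = np.ones_like(probs)  # every noisy count was negative: use uniform
    probs /= probs.sum()
    return rng.choice(np.asarray(categories), size=n_synthetic, p=probs)

# Usage: 1,000 private records; a large epsilon keeps the noise negligible here.
rng = np.random.default_rng(0)
private = ["A"] * 900 + ["B"] * 100
synthetic = dp_categorical_synthesizer(private, ["A", "B", "C"], epsilon=1e6,
                                       n_synthetic=10_000, rng=rng)
```

With a small ε such as 0.1, the Laplace noise has a standard deviation of about 14 (√2/ε). That much noise swamps rare categories in a small dataset but barely affects counts in the thousands. This is the privacy-utility trade-off described above, in miniature.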

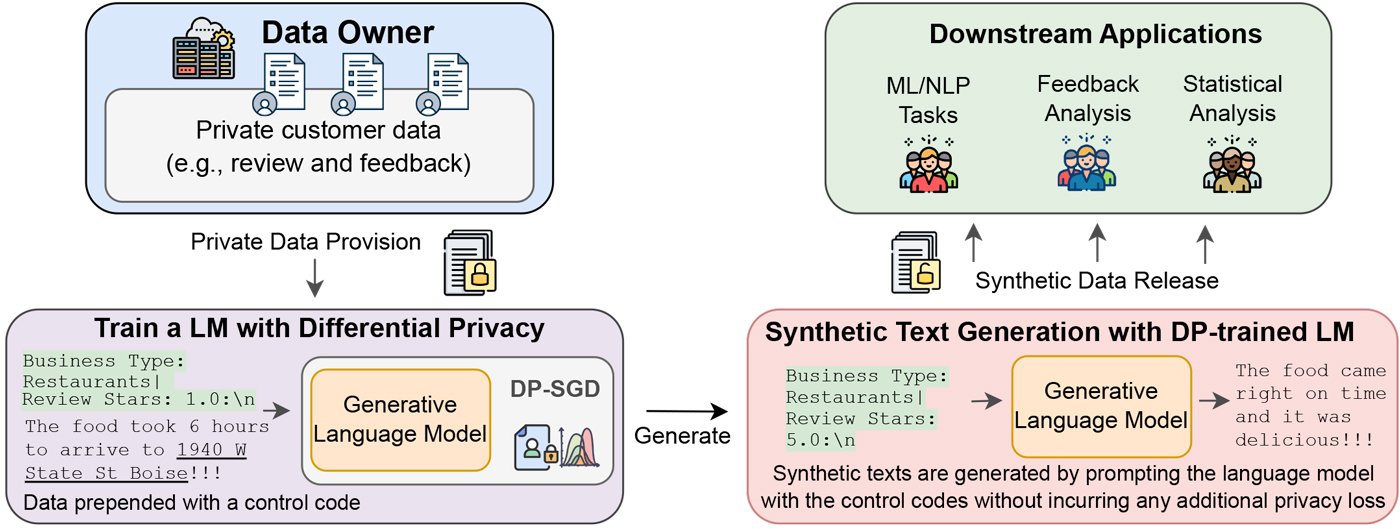

An Illustrative Example of Using DP for LLM Fine-tuning For Text Generation

Section titled “An Illustrative Example of Using DP for LLM Fine-tuning For Text Generation”

Figure 4: Researchers from Microsoft fine-tuned an LLM with DP on a private data corpus. The model can then be used to generate synthetic examples that resemble the private corpus.

Image reference:

Synthetic Text Generation with Differential Privacy: A Simple and Practical Recipe
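DP fine-tuning of the kind shown in Figure 4 is commonly implemented with DP-SGD (or a DP variant of Adam). DP-SGD applies the clipping and calibrated noise from Figure 3 at every training step. The sketch below shows a single DP-SGD update in NumPy on a flat parameter vector. It is illustrative only, not the cited paper's code:

- The function and argument names are hypothetical.
- Per-example gradients are assumed to be already computed.
- It omits the privacy accountant that converts the noise multiplier, sampling rate, and number of steps into a final (ε,δ).

In practice, libraries such as Opacus (PyTorch) and TensorFlow Privacy handle per-example gradients and accounting.

```python
import numpy as np

def dp_sgd_step(params, per_example_grads, lr, clip_norm, noise_multiplier, rng):
    """One DP-SGD update. `per_example_grads` has shape (batch_size, n_params),
    one gradient row per training example."""
    # 1) Clip each example's gradient to L2 norm <= clip_norm,
    #    bounding how far any single example can move the parameters.
    norms = np.linalg.norm(per_example_grads, axis=1, keepdims=True)
    scale = np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    clipped = per_example_grads * scale
    # 2) Sum the clipped gradients and add Gaussian noise with
    #    standard deviation noise_multiplier * clip_norm.
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=params.shape)
    noisy_sum = clipped.sum(axis=0) + noise
    # 3) Average over the batch and take an ordinary gradient step.
    return params - lr * noisy_sum / per_example_grads.shape[0]

# Usage: with noise_multiplier=0 the step is deterministic, which makes the
# clipping visible. [3, 4] (norm 5) is scaled down to [0.6, 0.8].
rng = np.random.default_rng(0)
grads = np.array([[3.0, 4.0], [0.0, 0.5]])
new_params = dp_sgd_step(np.zeros(2), grads, lr=1.0, clip_norm=1.0,
                         noise_multiplier=0.0, rng=rng)
```

Clipping bounds each example's influence, so Gaussian noise of a fixed scale can mask any individual's contribution. For the same number of steps, a larger `noise_multiplier` yields a smaller ε, at some cost in model quality.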

Privacy isn’t a binary yes-or-no question—it’s about managing risk based on your specific context and threat model. Start with simple smoke tests to catch obvious issues, use attack-based evaluations for realistic threat assessment, and consider DP-SDG when you need formal guarantees with strong privacy protection.

The next chapter turns to practical synthesis considerations, taking a systematic, pipeline-driven approach to generating useful synthetic data for your specific applications.

Footnotes

1. Membership inference is trickier for data such as images, videos and audio. For example, even if a synthetic image is far from a real image under some distance metric, it could still be perceptually similar and leak sensitive identity information. Perceptual-similarity evaluation is therefore critical for such data.

2. In a recent empirical work, the authors systematically performed various attacks on synthetic data with differential privacy guarantees and showed that such data can still leak sensitive information. However, we argue that gaps in how differential privacy was applied may have contributed to these failures; the work also lacks details about its implementation.