11. Outlook And Trends

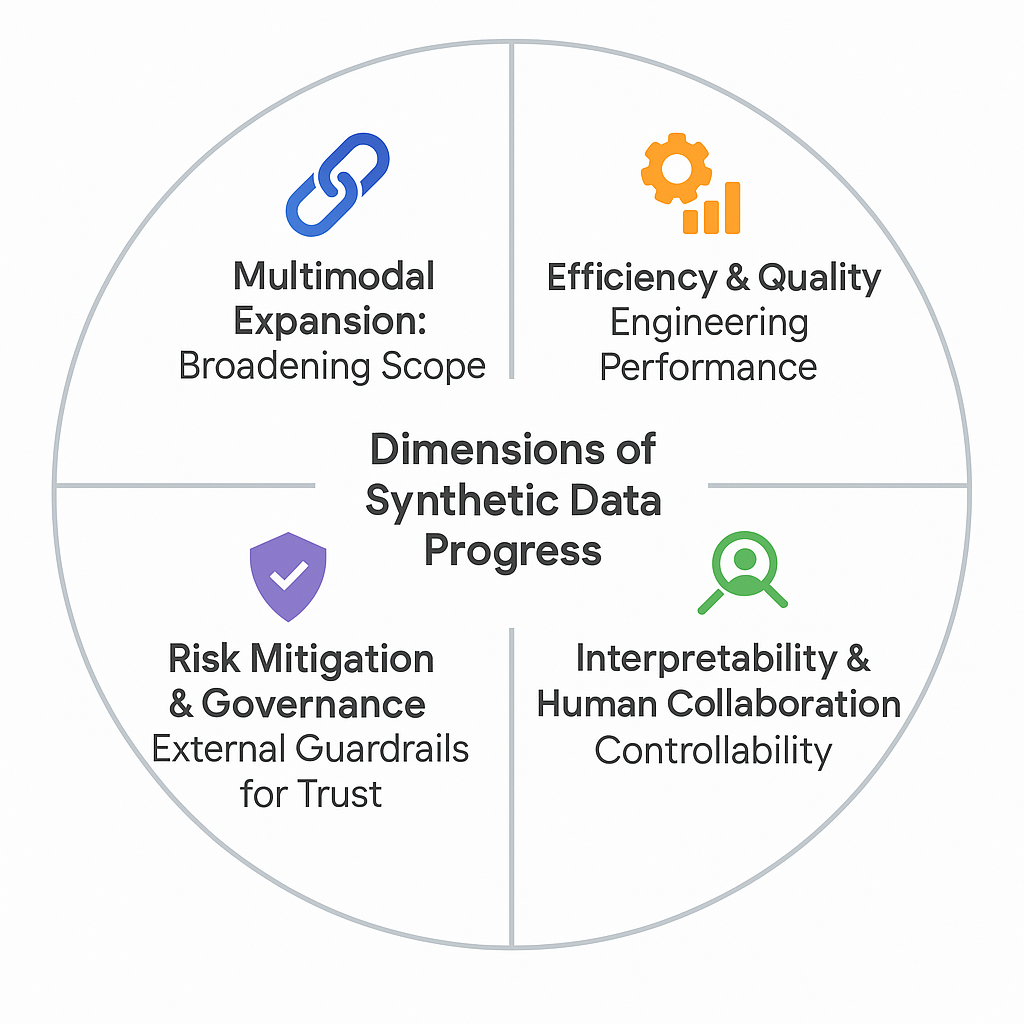

As synthetic data generation (SDG) matures from experimental technique to production-ready technology, the pressing question becomes: “can we generate better, more diverse (across modalities, domains, and scenarios), and safer data?” To frame this trajectory, we outline four dimensions: modality expansion, efficiency and quality, risk mitigation and governance, and interpretability and collaboration.

Figure: We define four key dimensions shaping the evolving landscape of synthetic data.

1. Multimodal Expansion: Broadening Scope

Synthetic data is moving from a single modality or data type (e.g., text, images, sensor data) toward richer ecosystems that combine or bridge modalities. This expansion allows AI systems to learn from more complex, realistic contexts.

- Multimodal: combining multiple modalities, e.g., X-Drive jointly generating LiDAR (point clouds) and camera images for autonomous driving.

- Cross-modal: using one modality to generate or enrich another — e.g., MAGID1 augments dialogue (text) datasets with synthetic images, while text corpora in low-resource languages can be expanded by creating paired images to improve translation tasks.

2. Efficiency & Quality: Engineering Performance

Current advances aim to make SDG cheaper, faster, and more realistic, while also pushing for stronger quality evaluation.

- Model progress: Diffusion and transformer-based models are now widely used for data synthesis, generally offering higher fidelity and stability compared to earlier GAN-based approaches.

- Cheaper LLM generation: LLM-based inference is showing a downward cost trend. For instance, text generation fell from roughly $60 to under $1 per million tokens (2022–2024), while image synthesis dropped from $0.06 to about $0.002 per image.

- SLMs on the rise: Smaller language models (SLMs) fine-tuned on real data are being adopted as efficient, domain-specific synthetic generators. They are typically cheaper to run, easier to deploy locally, and more straightforward to audit than large-scale LLMs.

- Quality assurance: Research points to explainable AI for diagnosing divergences between synthetic and real data, alongside debiasing strategies that preserve not only distributions but also the reliability of insights drawn from them.
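To put the cost figures quoted in the list above into perspective, here is a back-of-the-envelope sketch. The prices are the rounded figures from the text (with "under $1" treated as an upper bound of $1), and the workload of 100,000 records at 500 tokens each is a purely hypothetical example; real prices vary by provider and model.

```python
# Rounded prices from the text above; treat them as illustrative only.
TEXT_USD_PER_M_TOKENS = {"before": 60.0, "after": 1.0}   # USD per million tokens
IMAGE_USD_PER_IMAGE = {"before": 0.06, "after": 0.002}   # USD per image


def text_generation_cost(num_records, tokens_per_record, usd_per_m_tokens):
    """Cost in USD of generating num_records texts of a given token length."""
    total_tokens = num_records * tokens_per_record
    return total_tokens / 1_000_000 * usd_per_m_tokens


def fold_reduction(old_price, new_price):
    """How many times cheaper the new price is than the old one."""
    return old_price / new_price


# Hypothetical workload: 100,000 synthetic records of about 500 tokens each.
cost_before = text_generation_cost(100_000, 500, TEXT_USD_PER_M_TOKENS["before"])
cost_after = text_generation_cost(100_000, 500, TEXT_USD_PER_M_TOKENS["after"])

text_fold = fold_reduction(TEXT_USD_PER_M_TOKENS["before"], TEXT_USD_PER_M_TOKENS["after"])
image_fold = fold_reduction(IMAGE_USD_PER_IMAGE["before"], IMAGE_USD_PER_IMAGE["after"])

print(f"Text dataset: ${cost_before:,.0f} before vs. ${cost_after:,.0f} after")
print(f"Text is roughly {text_fold:.0f}x cheaper, images roughly {image_fold:.0f}x cheaper")
```

Under these assumptions, the same 50-million-token dataset drops from about $3,000 to at most $50, which is why generation cost is becoming a smaller share of the budget than quality evaluation.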

3. Risk Mitigation & Governance: External Guardrails for Trust

While governments and organizations are increasingly adopting synthetic data to meet compliance and quality needs, new risks are emerging as its use expands. These include provenance laundering (misuse through stripped or forged watermarks), benchmark contamination (synthetic data leaking into evaluation sets), and model collapse (quality degradation from iterative self-training). These failure modes highlight the need for stronger external guardrails. Practitioners are also recognizing that adoption is not just a technical challenge; it is equally about compliance and trust.
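Of the risks named above, benchmark contamination is the easiest to screen for mechanically. The sketch below flags benchmark items that share a word n-gram with a synthetic corpus. It is one simple heuristic, not a standard detector: the function names, the n-gram length, and the sample strings are all assumptions made for illustration, and exact n-gram matching will miss paraphrased leaks.

```python
import re


def word_ngrams(text, n):
    """Return the set of lowercase word n-grams in a string."""
    tokens = re.findall(r"\w+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_report(benchmark_items, synthetic_corpus, n=5):
    """Flag benchmark items sharing at least one n-gram with the corpus.

    Returns (fraction_flagged, flagged_items).
    """
    corpus_grams = set()
    for doc in synthetic_corpus:
        corpus_grams |= word_ngrams(doc, n)

    flagged = [item for item in benchmark_items
               if word_ngrams(item, n) & corpus_grams]
    rate = len(flagged) / len(benchmark_items) if benchmark_items else 0.0
    return rate, flagged


# Hypothetical toy data, invented for this example.
benchmark = [
    "What is the capital city of France and its population?",
    "Name the largest planet in the solar system.",
]
synthetic = [
    "Q: what is the capital city of France? A: Paris.",
    "The sky appears blue because of Rayleigh scattering.",
]

rate, flagged = contamination_report(benchmark, synthetic, n=5)
print(f"{rate:.0%} of benchmark items overlap with the synthetic corpus")
```

Shorter n-grams catch more overlaps but raise more false alarms on common phrases, so the threshold is worth tuning per dataset.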

As noted in recent research, governance must not only minimize harm but also build trust and accountability into synthetic data pipelines. This requires balancing four key pillars:

- Effectiveness: policies that genuinely protect rights and fairness.

- Feasibility: standards that organizations of different sizes and capacities can realistically implement.

- Enforceability: mechanisms for audits, detection, and proportionate penalties.

- Adaptability: flexibility to keep pace with evolving generative methods and emerging risks.

4. Interpretability & Human Collaboration: Controllability

The focus is shifting from “can we generate?” to “do we understand and guide what we generate?” This reflects growing recognition that synthetic data pipelines must be transparent, explainable, and interactive with human expertise.

- Explainability: Generative models are often black boxes, but explainable AI tools are being used to make outputs interpretable. For instance, classifiers trained to separate real from synthetic data can be probed to reveal which features consistently diverge.

- Scaling laws: Research is mapping how downstream performance scales with synthetic data. Such studies help practitioners forecast when adding more synthetic data will yield significant gains, and when to shift focus toward improving quality.

- Human-in-the-loop: Fully automated generation can miss subtle errors or domain-specific nuances. Tools like INSPECTOR demonstrate that combining AI support with expert review can triple error detection compared to manual checks, blending automation with human judgment.
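The explainability idea in the list above (train a classifier to separate real from synthetic data, then probe it) can be sketched with a small logistic regression written in plain Python. Everything here is an illustrative assumption: the two-feature toy data, the deliberate mean shift on feature 1, and the hyperparameters. In practice a library classifier and a held-out test split would be the more usual choice.

```python
import math
import random


def make_rows(n, shift, rng):
    """Toy rows with two features; `shift` moves the mean of feature 1 only."""
    return [[rng.gauss(0.0, 1.0), rng.gauss(shift, 1.0)] for _ in range(n)]


def train_logreg(X, y, lr=0.1, epochs=200):
    """Batch gradient descent on the logistic loss; returns (weights, bias)."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            z = b + sum(wj * xj for wj, xj in zip(w, xi))
            err = 1.0 / (1.0 + math.exp(-z)) - yi  # predicted prob minus label
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


rng = random.Random(0)
real = make_rows(200, shift=0.0, rng=rng)        # label 0
synthetic = make_rows(200, shift=1.5, rng=rng)   # label 1, feature 1 is off

X = real + synthetic
y = [0] * len(real) + [1] * len(synthetic)
w, b = train_logreg(X, y)

# Training-set accuracy, kept simple here; use held-out data in practice.
preds = [1 if b + sum(wj * xj for wj, xj in zip(w, xi)) > 0 else 0 for xi in X]
accuracy = sum(p == t for p, t in zip(preds, y)) / len(y)

# Features are on a comparable scale, so |weight| is a rough divergence signal.
ranked = sorted(range(len(w)), key=lambda j: abs(w[j]), reverse=True)
print(f"real-vs-synthetic accuracy: {accuracy:.2f}")
print(f"features ranked by divergence: {ranked}")
```

Accuracy near 0.5 would suggest the classifier cannot tell the two sets apart. Accuracy well above that, together with the weight ranking, points to the features a generator is reproducing poorly.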

The trends and outlooks for synthetic data can be seen as dimensions, each answering a different question about the future: Where can it go? How do we make it work better? How do we keep it safe? And how do we understand and steer it? For practitioners and researchers, staying attuned to these directions is essential for responsible adoption and innovation.